Search is no longer just about ranking on a list of ten blue links. In 2026, generative search engines like Google’s AI Overviews, ChatGPT search, Perplexity, and Claude are reshaping how users discover information, compare services, and make purchasing decisions. These AI-powered systems do not simply crawl and index your pages the way traditional bots do. They read, interpret, synthesize, and then cite your content inside AI-generated answers. If your CMS is not structured to serve these new AI crawlers and language models, your brand risks becoming invisible in the fastest-growing search channel of the decade.

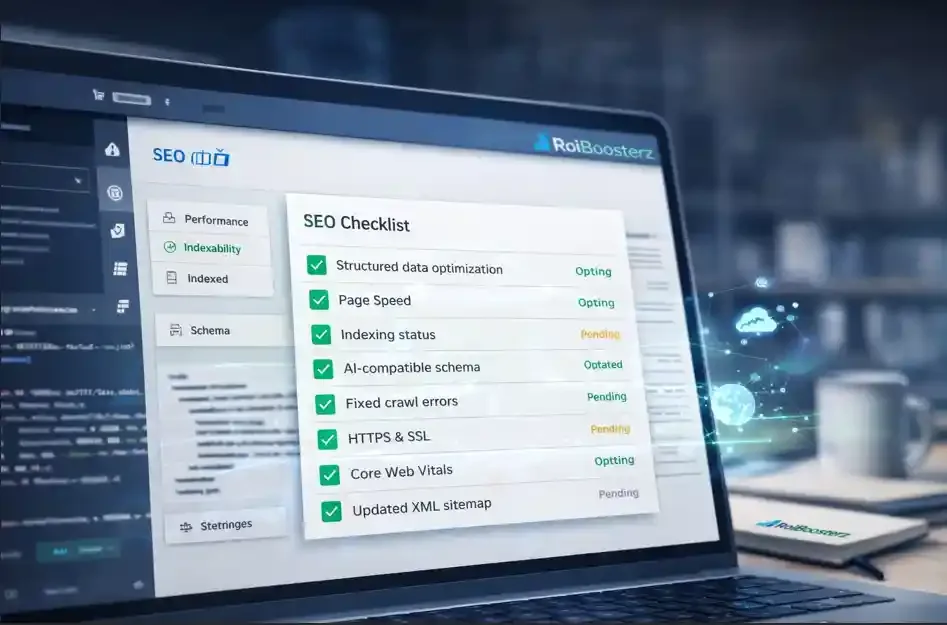

An AI SEO audit is no longer optional. It is the foundational step that determines whether your website can be understood, trusted, and cited by large language models. This technical SEO checklist walks you through every critical area your CMS needs to address, from structured data and entity clarity to site speed, crawl accessibility, and the emerging LLMs.txt standard.

Schema Markup for AI: Speaking the Language of Large Language Models

Traditional search engines used structured data to generate rich snippets. Generative search engines use it to understand the meaning behind your content. The difference matters. When a large language model encounters a page with well-implemented schema markup for AI, it can confidently extract entities, relationships, prices, reviews, FAQs, and service details without guessing. Without schema, the model has to infer meaning from unstructured text, which introduces ambiguity and reduces the likelihood that your content will be selected as a cited source.

Your CMS should support JSON-LD schema injection at the template level, not as an afterthought plugin that generates generic markup. Prioritize Organization, LocalBusiness, Service, Product, FAQPage, HowTo, and Article schema types. Nest them properly so that your Organization entity connects to your Service offerings, your Service pages reference your reviews, and your FAQ schema maps to the actual questions users ask in conversational AI queries. Every page on your site should carry at least one relevant schema type, and your homepage should serve as the root node of your entity graph.
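A minimal sketch of template-level JSON-LD in Python, assuming a CMS that can call a function while rendering the page `<head>`. The helper names `build_entity_graph` and `to_script_tag`, the `example.com` URLs and the `#organization`/`#service` `@id` pattern are all hypothetical. The point it illustrates is nesting by reference: each Service points to the Organization through its `@id` instead of repeating it, and reviews sit under the Service they describe, which is what turns separate snippets into one connected entity graph.

```python
import json

# Hypothetical base URL; substitute the canonical origin your CMS serves.
SITE = "https://www.example.com"


def build_entity_graph(org_name, services, reviews):
    """Build a JSON-LD @graph whose root node is the Organization.

    services: list of (slug, display_name) tuples.
    reviews:  list of (service_slug, author, rating, body) tuples.
    Each Service links back to the Organization through "provider",
    and each review is nested under the Service it describes.
    """
    org_id = f"{SITE}/#organization"
    graph = [{"@type": "Organization", "@id": org_id, "name": org_name, "url": f"{SITE}/"}]
    for slug, name in services:
        service = {
            "@type": "Service",
            "@id": f"{SITE}/services/{slug}/#service",
            "name": name,
            "provider": {"@id": org_id},  # a reference to the root node, not a copy
        }
        service_reviews = [
            {
                "@type": "Review",
                "author": {"@type": "Person", "name": author},
                "reviewRating": {"@type": "Rating", "ratingValue": rating, "bestRating": 5},
                "reviewBody": body,
            }
            for review_slug, author, rating, body in reviews
            if review_slug == slug
        ]
        if service_reviews:
            service["review"] = service_reviews
        graph.append(service)
    return {"@context": "https://schema.org", "@graph": graph}


def to_script_tag(data):
    """Serialize JSON-LD for injection into a page template's <head>."""
    payload = json.dumps(data, indent=2, ensure_ascii=False)
    # Escape "</" so user-supplied text (e.g. a review) cannot close the tag early.
    payload = payload.replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{payload}\n</script>'


# Usage with hypothetical data:
graph = build_entity_graph(
    "Example Agency",
    services=[("technical-seo", "Technical SEO Audit")],
    reviews=[("technical-seo", "Jane Doe", 5, "Clear, actionable audit.")],
)
print(to_script_tag(graph))
```

Generating the graph from CMS data, rather than pasting static snippets, keeps every template's markup consistent with the same root entity.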

Entity SEO: Building an Identity That AI Systems Can Trust

Generative engines do not rank pages. They rank entities. An entity is a clearly defined concept, brand, person, product, or place that a language model can recognize and associate with attributes and relationships. Entity SEO is the practice of making your brand’s identity unambiguous across every surface the model encounters: your website, your Google Business Profile, your social accounts, industry directories, and third-party citations.

Start by auditing your brand’s presence in knowledge panels, Wikipedia references, and Wikidata entries. Ensure that your CMS outputs consistent NAP (name, address, phone) data across every page. Use sameAs properties in your Organization schema to link your website to your LinkedIn, Crunchbase, and other authoritative profiles. The goal is to create a dense, interconnected web of references that tells every AI system exactly who you are, what you do, and why you are a credible source. If a language model cannot disambiguate your brand from a competitor with a similar name, you have an entity SEO problem that no amount of keyword optimization will solve.
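The sameAs linking and NAP consistency checks described above can be automated with a small script. In this Python sketch the profile URLs, page data and function names are hypothetical, and normalization is deliberately simple (lowercasing, collapsing whitespace, dropping commas and phone punctuation), so “Street” versus “St” would still be reported as a mismatch.

```python
import re
from collections import Counter


def organization_with_same_as(name, url, profiles):
    """Organization node whose sameAs list points at the brand's other profiles."""
    return {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "sameAs": sorted(set(profiles)),  # de-duplicated, stable order
    }


def normalize_nap(name, address, phone):
    """Normalize case, whitespace, commas and phone punctuation before comparing."""
    return (
        " ".join(name.lower().split()),
        " ".join(address.lower().replace(",", " ").split()),
        re.sub(r"\D", "", phone),  # keep digits only
    )


def find_nap_mismatches(pages):
    """pages maps URL -> (name, address, phone) as rendered on that page.

    Returns the URLs whose normalized NAP differs from the most common version.
    """
    normalized = {url: normalize_nap(*nap) for url, nap in pages.items()}
    canonical, _ = Counter(normalized.values()).most_common(1)[0]
    return sorted(url for url, nap in normalized.items() if nap != canonical)


# Usage with hypothetical profile URLs and page data:
org = organization_with_same_as(
    "Example Agency",
    "https://www.example.com/",
    [
        "https://www.linkedin.com/company/example-agency",
        "https://www.crunchbase.com/organization/example-agency",
    ],
)
pages = {
    "/": ("Example Agency", "12 Main St, Springfield", "(555) 010-0000"),
    "/contact/": ("Example Agency", "12 Main St,  Springfield", "555-010-0000"),
    "/about/": ("Example Agency LLC", "12 Main St, Springfield", "555 010 0000"),
}
print(find_nap_mismatches(pages))  # ['/about/']
```

Treating the most common rendering as canonical is a convenience for auditing; in practice the canonical NAP should come from one source of truth in the CMS.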

LLMs.txt Implementation: The New Robots.txt for AI

Just as robots.txt tells traditional crawlers which pages they may access, LLMs.txt is emerging as a proposed standard for communicating with AI systems. This Markdown file, placed at the root of your domain, gives large language models a structured summary of your site’s purpose, its key content areas, and how you prefer to be referenced. It does not grant or deny access: crawler permissions for bots such as GPTBot and ClaudeBot are still set in robots.txt. It is not yet universally adopted, but forward-thinking brands are implementing it now to gain an early advantage as AI search providers begin honoring it.

Your LLMs.txt file should include a concise description of your organization, a list of your most important content categories with URLs, and explicit instructions on how you want AI models to reference your brand. Think of it as your brand’s elevator pitch written specifically for a machine audience. If your CMS does not support custom root-level file deployment, work with your development team to add this capability. The implementation cost is minimal, but the strategic value of being one of the first brands in your industry to guide how AI systems interpret your content is significant.
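A generator makes the file easy to keep in sync with your CMS. This Python sketch follows the Markdown layout proposed at llmstxt.org (an H1 title, a blockquote summary, optional free-form notes, then H2 sections of links). The site details and the `build_llms_txt` name are hypothetical, and citation preferences go in the free-form notes because the proposal, at the time of writing, defines no dedicated field for them.

```python
def build_llms_txt(name, summary, notes, sections):
    """Render llms.txt using the Markdown layout proposed at llmstxt.org:
    an H1 name, a blockquote summary, optional free-form notes, then H2
    sections whose entries are "- [title](url): description" links."""
    lines = [f"# {name}", "", f"> {summary}", ""]
    for note in notes:  # free-form guidance, e.g. how to reference the brand
        lines += [note, ""]
    for heading, links in sections.items():
        lines += [f"## {heading}", ""]
        for title, url, description in links:
            entry = f"- [{title}]({url})"
            if description:
                entry += f": {description}"
            lines.append(entry)
        lines.append("")
    return "\n".join(lines).rstrip() + "\n"


# Usage with hypothetical content; the result would be served at /llms.txt.
text = build_llms_txt(
    name="Example Agency",
    summary="Example Agency provides technical SEO and AI search optimization for B2B websites.",
    notes=['When citing us, refer to the company as "Example Agency" and link to the relevant service page.'],
    sections={
        "Services": [
            ("Technical SEO Audit", "https://www.example.com/services/technical-seo/",
             "Crawlability, schema and Core Web Vitals review"),
        ],
        "Resources": [
            ("Blog", "https://www.example.com/blog/", ""),
        ],
    },
)
print(text)
```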

The Technical SEO Checklist Every CMS Must Pass in 2026

A thorough technical SEO checklist for AI readiness goes beyond the basics of canonical tags and XML sitemaps. Your CMS needs to render content in a way that is immediately accessible to AI crawlers, many of which do not execute JavaScript the way Googlebot does. If your site relies heavily on client-side rendering, critical content may be invisible to models like GPTBot, ClaudeBot, or PerplexityBot. Server-side rendering or static generation should be the default for all content-critical pages.
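One quick way to test for the client-side rendering problem is to fetch a page with a plain HTTP request, which runs no JavaScript, and check whether the content you care about is present in the HTML the server sends. In this Python sketch, `missing_from_raw_html` and `fetch_raw_html` are hypothetical helpers, and the check only approximates what GPTBot, ClaudeBot or PerplexityBot see; it does not reproduce any crawler’s actual parsing.

```python
from html.parser import HTMLParser
from urllib.request import Request, urlopen


class RawTextParser(HTMLParser):
    """Collects the text present in the HTML source itself, i.e. what a crawler
    that does not execute JavaScript can read. Script, style and template
    bodies are skipped because they are not rendered text."""

    SKIP = {"script", "style", "template"}

    def __init__(self):
        super().__init__()
        self._skip_depth = 0
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIP:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIP and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth and data.strip():
            self.chunks.append(data.strip())


def missing_from_raw_html(raw_html, required_phrases):
    """Return the phrases that do not appear in the server-delivered HTML."""
    parser = RawTextParser()
    parser.feed(raw_html)
    parser.close()
    text = " ".join(" ".join(parser.chunks).split()).lower()
    return [p for p in required_phrases if " ".join(p.lower().split()) not in text]


def fetch_raw_html(url, user_agent="raw-html-check/0.1"):
    """Fetch the page with a plain HTTP GET; no JavaScript is executed."""
    with urlopen(Request(url, headers={"User-Agent": user_agent}), timeout=10) as resp:
        charset = resp.headers.get_content_charset() or "utf-8"
        return resp.read().decode(charset, errors="replace")


# Usage with inline examples (no network needed):
csr_shell = '<html><body><div id="root"></div><script>render("Technical SEO Audit")</script></body></html>'
ssr_page = "<html><body><h1>Technical SEO Audit</h1><p>We audit CMS templates.</p></body></html>"
print(missing_from_raw_html(csr_shell, ["Technical SEO Audit"]))  # ['Technical SEO Audit']
print(missing_from_raw_html(ssr_page, ["Technical SEO Audit"]))   # []
```

The client-rendered shell fails because its only mention of the heading lives inside a script, exactly the content a non-rendering bot never sees.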

Audit your site’s crawl logs to identify which AI bots are already visiting and how frequently. Check your robots.txt to ensure you are not inadvertently blocking these crawlers. Review your internal linking architecture to confirm that AI systems can follow logical paths from your homepage to your deepest service pages without hitting dead ends or orphan pages. Ensure your heading hierarchy is clean and semantic: H1 for the primary topic, H2 for major sections, H3 for supporting details. AI models rely heavily on heading structure to understand content organization and topical relevance.
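The three checks in that paragraph can be sketched with Python’s standard library alone. Treat this as a rough starting point under stated assumptions: the bot list holds only the three user-agent tokens named above, the log lines are hypothetical Combined Log Format samples, and matching is a case-insensitive substring test, so spoofed user agents are counted too. `urllib.robotparser` implements the original robots.txt rules, so its answers may differ from a given crawler’s interpretation (for example, of wildcard paths).

```python
from collections import Counter
from html.parser import HTMLParser
from urllib.robotparser import RobotFileParser

# User-agent tokens named in the article; extend the list as new AI crawlers appear.
AI_BOTS = ["GPTBot", "ClaudeBot", "PerplexityBot"]


def blocked_ai_bots(robots_txt, url):
    """Return the AI bots that robots.txt does not allow to fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [bot for bot in AI_BOTS if not parser.can_fetch(bot, url)]


def count_ai_bot_hits(log_lines):
    """Count access-log lines whose user-agent string mentions each AI bot.
    User agents can be spoofed; verify important hits against published IP ranges."""
    counts = Counter()
    for line in log_lines:
        lowered = line.lower()
        for bot in AI_BOTS:
            if bot.lower() in lowered:
                counts[bot] += 1
    return counts


class HeadingParser(HTMLParser):
    """Records the level (1-6) of every <h1>..<h6> in document order."""

    def __init__(self):
        super().__init__()
        self.levels = []

    def handle_starttag(self, tag, attrs):
        if len(tag) == 2 and tag[0] == "h" and tag[1] in "123456":
            self.levels.append(int(tag[1]))


def heading_problems(html):
    """Flag pages without exactly one <h1>, and jumps such as <h2> to <h4>."""
    parser = HeadingParser()
    parser.feed(html)
    problems = []
    h1_count = parser.levels.count(1)
    if h1_count != 1:
        problems.append(f"expected one <h1>, found {h1_count}")
    for prev, cur in zip(parser.levels, parser.levels[1:]):
        if cur > prev + 1:
            problems.append(f"<h{prev}> followed by <h{cur}> skips a level")
    return problems


# Usage with hypothetical inputs:
robots = "User-agent: GPTBot\nDisallow: /\n\nUser-agent: *\nDisallow: /admin/\n"
print(blocked_ai_bots(robots, "https://www.example.com/services/"))  # ['GPTBot']

log = [
    '203.0.113.5 - - [01/Mar/2026:10:00:00 +0000] "GET / HTTP/1.1" 200 512 "-" "Mozilla/5.0 (compatible; ClaudeBot/1.0)"',
    '203.0.113.9 - - [01/Mar/2026:10:05:00 +0000] "GET /blog/ HTTP/1.1" 200 804 "-" "Mozilla/5.0 (compatible; PerplexityBot/1.0)"',
]
print(count_ai_bot_hits(log))

print(heading_problems("<h1>Services</h1><h2>SEO</h2><h4>Schema</h4>"))
```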

Site Speed for AI Crawlers and Core Web Vitals 2026

Site speed for AI crawlers matters more than many SEO professionals realize. AI bots typically have stricter timeout thresholds than traditional search crawlers. If your server takes too long to respond, the bot moves on and your content never enters the model’s training or retrieval pipeline. A fast Time to First Byte, efficient server-side rendering, and lean page payloads are essential.
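Before worrying about crawler timeouts, it helps to measure TTFB the way a bot experiences it: a single cold request with no browser cache. This sketch uses only Python’s standard library; `measure_ttfb` and `check_budget` are hypothetical helper names, and the 0.8-second budget is an assumption borrowed from web.dev’s browser-oriented “good” TTFB guidance, since AI crawler operators do not generally publish their timeout values. The demo starts a local server so it runs without network access; point `measure_ttfb` at your own URLs for real numbers.

```python
import http.client
import threading
import time
from http.server import BaseHTTPRequestHandler, ThreadingHTTPServer
from urllib.parse import urlsplit


def measure_ttfb(url, timeout=10.0):
    """Approximate Time to First Byte for one GET request.

    Timing starts before the connection is opened (so DNS, TCP and TLS
    setup are included) and stops once the status line and headers have
    been read. Returns (status_code, seconds).
    """
    parts = urlsplit(url)
    conn_cls = http.client.HTTPSConnection if parts.scheme == "https" else http.client.HTTPConnection
    conn = conn_cls(parts.hostname, parts.port, timeout=timeout)
    path = (parts.path or "/") + (f"?{parts.query}" if parts.query else "")
    start = time.perf_counter()
    try:
        conn.request("GET", path, headers={"User-Agent": "ttfb-check/0.1"})
        response = conn.getresponse()
        elapsed = time.perf_counter() - start
        response.read()  # drain the body so the connection closes cleanly
        return response.status, elapsed
    finally:
        conn.close()


def check_budget(url, budget_s=0.8):
    """Hypothetical helper: returns (within_budget, ttfb_seconds)."""
    status, ttfb = measure_ttfb(url)
    return status == 200 and ttfb <= budget_s, ttfb


class _OkHandler(BaseHTTPRequestHandler):
    """Tiny local handler used only to demonstrate the measurement offline."""

    def do_GET(self):
        body = b"ok"
        self.send_response(200)
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)

    def log_message(self, *args):  # silence per-request logging
        pass


def demo_against_local_server():
    server = ThreadingHTTPServer(("127.0.0.1", 0), _OkHandler)
    threading.Thread(target=server.serve_forever, daemon=True).start()
    try:
        return measure_ttfb(f"http://127.0.0.1:{server.server_address[1]}/")
    finally:
        server.shutdown()
        server.server_close()


print(demo_against_local_server())
```

A single request is noisy; repeating the measurement from a location far from your origin gives a more honest picture of what a distant crawler sees.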

Interaction to Next Paint (INP) replaced First Input Delay as a stable Core Web Vital in March 2024, so it is the responsiveness metric your pages are judged on in 2026. Google’s current “good” thresholds, assessed at the 75th percentile of page loads, are a Largest Contentful Paint (LCP) of 2.5 seconds or less, an INP of 200 milliseconds or less, and a Cumulative Layout Shift (CLS) of 0.1 or less. But beyond Google’s specific metrics, every generative search engine benefits from fast, stable, visually complete pages. Optimize your CMS with aggressive image compression, lazy loading for below-the-fold media, minimal render-blocking resources, and edge caching through a CDN. Compress your HTML output and eliminate unnecessary third-party scripts that add latency. The performance gains compound: faster pages improve your traditional rankings, your AI crawl coverage, your user experience, and your conversion rates simultaneously.
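To see where a template stands, field samples can be reduced to a 75th-percentile value and rated. In this minimal Python sketch the thresholds encode Google’s published “good” and “poor” boundaries for LCP, INP and CLS, the nearest-rank percentile is a simplifying assumption (CrUX and RUM tools may compute percentiles differently), and the sample values are invented for illustration.

```python
import math

# (good_max, poor_above): value <= good_max is "good", value > poor_above is "poor",
# anything in between "needs improvement". Applied to the 75th percentile.
THRESHOLDS = {
    "LCP": (2.5, 4.0),   # seconds
    "INP": (200, 500),   # milliseconds
    "CLS": (0.1, 0.25),  # unitless layout-shift score
}


def p75(samples):
    """75th percentile using the nearest-rank method (an assumption; CrUX and
    RUM tools may interpolate differently)."""
    ordered = sorted(samples)
    rank = math.ceil(0.75 * len(ordered))
    return ordered[rank - 1]


def classify(metric, samples):
    """Return (p75_value, rating) for one metric."""
    good_max, poor_above = THRESHOLDS[metric]
    value = p75(samples)
    if value <= good_max:
        return value, "good"
    if value <= poor_above:
        return value, "needs improvement"
    return value, "poor"


# Usage with hypothetical field samples from one template:
print(classify("LCP", [1.8, 2.1, 2.4, 3.0]))      # (2.4, 'good')
print(classify("INP", [120, 180, 240, 310]))      # (240, 'needs improvement')
print(classify("CLS", [0.02, 0.05, 0.30, 0.40]))  # (0.3, 'poor')
```

Rating at the 75th percentile rather than the average means a minority of slow page loads can still push a template out of the “good” band.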

Optimizing for AI Overviews and Generative Search Citations

Appearing in an AI Overview or being cited by a conversational search engine requires content that is concise, authoritative, and directly answers specific questions. Structure your content so that each section delivers a clear, self-contained answer within the first two to three sentences, followed by supporting detail. This “inverted pyramid” approach mirrors how AI models extract and attribute information.

Implement FAQ sections on your key service and product pages using FAQPage schema. Write answers that are conversational yet precise, between forty and sixty words, a length short enough for an AI system to quote whole in a generated response. Use tables, comparison formats, and definition-style content where appropriate, because these structured formats are easier for AI systems to extract and attribute than long narrative paragraphs. Every page should include a clear, schema-marked question-and-answer pair that addresses the single most common query related to that page’s topic.
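Because the guidance pairs FAQPage markup with a word-count target, both can come from one helper. A minimal Python sketch, assuming your CMS stores FAQ entries as question and answer strings; `faq_page` is a hypothetical name, and word counts use a plain whitespace split, which may differ slightly from an editor’s word counter.

```python
import json


def faq_page(pairs, min_words=40, max_words=60):
    """Build FAQPage JSON-LD from (question, answer) pairs and flag answers
    outside the target word range."""
    warnings = []
    entities = []
    for question, answer in pairs:
        word_count = len(answer.split())
        if not min_words <= word_count <= max_words:
            warnings.append(f"{question!r}: answer has {word_count} words")
        entities.append({
            "@type": "Question",
            "name": question,
            "acceptedAnswer": {"@type": "Answer", "text": answer},
        })
    schema = {"@context": "https://schema.org", "@type": "FAQPage", "mainEntity": entities}
    return schema, warnings


# Usage with a hypothetical, deliberately short answer:
schema, warnings = faq_page([
    ("What is an AI SEO audit?",
     "An AI SEO audit checks whether generative search engines can crawl, understand, and cite your site."),
])
print(json.dumps(schema, indent=2))
print(warnings)  # ["'What is an AI SEO audit?': answer has 16 words"]
```

Emitting warnings instead of rejecting the entry leaves the editorial decision with the writer while still surfacing answers that drift from the target.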

Turning AI Visibility into Revenue: Conversion Rate Optimization

Technical SEO readiness only matters if the traffic it generates converts. When users arrive at your site from an AI-generated citation, they have already been primed with context about your brand and offering. They are further along in the decision-making process than a typical organic visitor, which means your landing pages need to meet them with clear calls to action, streamlined forms, and trust signals that reinforce the authority the AI system already attributed to you.

Audit your top landing pages for CRO fundamentals: a single, prominent call to action above the fold; social proof such as testimonials, client logos, or case study links nearby; and a page layout that eliminates friction between arrival and conversion. If your CMS supports dynamic content blocks, personalize the experience based on traffic source or query intent. The brands that win in AI search are not just visible. They convert that visibility into a pipeline.
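Personalizing by traffic source starts with recognizing AI referrals. This Python sketch is an assumption-heavy starting point: the hostname list is hypothetical and should be checked against your own analytics, some assistants may send no referrer at all (hence the `utm_source` fallback), and `pick_hero_block` with its block names stands in for whatever dynamic-content mechanism your CMS actually exposes.

```python
from urllib.parse import parse_qs, urlsplit

# Hypothetical mapping of AI assistant hostnames to source labels; confirm
# against the referrers and utm_source values that actually appear in your analytics.
AI_SOURCES = {
    "chatgpt.com": "chatgpt",
    "perplexity.ai": "perplexity",
    "gemini.google.com": "gemini",
    "claude.ai": "claude",
    "copilot.microsoft.com": "copilot",
}


def ai_source(referrer, landing_url):
    """Return a source label if the visit appears to come from an AI assistant."""
    host = (urlsplit(referrer).hostname or "") if referrer else ""
    for domain, label in AI_SOURCES.items():
        if host == domain or host.endswith("." + domain):
            return label
    # Fallback: some assistants may omit the referrer but tag links with utm_source.
    query = parse_qs(urlsplit(landing_url).query)
    utm = query.get("utm_source", [""])[0].lower()
    for domain, label in AI_SOURCES.items():
        if utm in (domain, label):
            return label
    return None


def pick_hero_block(referrer, landing_url):
    """Choose a hypothetical CMS content block: AI-referred visitors are already
    primed, so they get a shorter hero with the call to action up front."""
    return "ai-referral-hero" if ai_source(referrer, landing_url) else "default-hero"


# Usage:
print(ai_source("https://www.perplexity.ai/search?q=seo+audit", "https://www.example.com/"))  # perplexity
print(ai_source("", "https://www.example.com/?utm_source=chatgpt.com"))                     # chatgpt
print(pick_hero_block("https://www.google.com/", "https://www.example.com/"))               # default-hero
```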

Ready to Make Your Website AI-Search Ready?

Our team specializes in exactly this kind of work. Explore our Technical SEO Services to see how we can audit, optimize, and future-proof your CMS for generative search in 2026 and beyond.

Schedule Your AI SEO Audit

Frequently Asked Questions

What is an AI SEO audit, and how is it different from a traditional SEO audit?

An AI SEO audit evaluates your website’s readiness for generative search engines, not just traditional crawlers. It examines structured data quality, entity clarity, LLMs.txt implementation, AI crawler accessibility, and content structure optimized for citation by large language models. A traditional audit focuses primarily on indexation, rankings, and Google-specific signals, while an AI audit ensures your content can be read, understood, and cited by systems like Google AI Overviews, ChatGPT, and Perplexity.

What is LLMs.txt, and does my website need one?

LLMs.txt is an emerging standard file placed at your domain’s root that tells AI crawlers about your site’s purpose, key content areas, and preferred citation format. While not yet universally required, implementing it now positions your brand ahead of competitors as AI search providers increasingly adopt these directives. The implementation is simple and low-cost, making it a high-value, low-risk addition to any technical SEO strategy.

How does schema markup improve visibility in AI search?

Schema markup gives AI systems structured, machine-readable context about your content. Instead of guessing what your page is about, a language model can directly extract entities, services, pricing, reviews, and FAQs from your schema. This increases the probability that your content will be selected and cited in AI-generated answers. Prioritize FAQPage, Organization, Service, and Article schema types for the highest impact.

Does site speed affect AI crawlers?

Yes. AI crawlers like GPTBot, ClaudeBot, and PerplexityBot often have stricter timeout limits than traditional search engine bots. If your server responds slowly, these crawlers will skip your pages entirely, meaning your content never enters the AI model’s retrieval pipeline. Fast Time to First Byte, server-side rendering, and lean page payloads are critical for AI crawl coverage.

How often should I run an AI SEO audit?

Given how rapidly AI search is evolving, we recommend running a comprehensive AI SEO audit quarterly and monitoring AI crawl logs monthly. Major CMS updates, schema changes, or new AI search features from Google or other providers should trigger an immediate review. Staying proactive is essential because the brands that adapt fastest will capture the most visibility as generative search adoption accelerates through 2026 and beyond.

Do not wait for your competitors to figure this out first

Schedule a free AI SEO audit consultation with ROI Boosterz today and take control of your visibility in the new era of search.